Building a high-quality product takes time and effort. But as hard as it is to build, it's even harder to maintain that quality over the years.

We work very hard to keep Beanstalk and Postmark at that level, with a beautiful web interface and powerful, easy-to-use features.

"Powerful and easy" is easier said than done. It takes a huge amount of effort to strike the right balance between great design, a rich feature set, and simplicity.

But that is what you, our customers, should see when you sign in to your Beanstalk account: a simple application with a powerful feature set that is still not complicated to use. You rely on Beanstalk every day, and as long as you are happy, we are happy too.

A huge amount of work goes on behind Beanstalk, and I would like to give you a quick tour of how we work on features to make sure you love the result. A good example is how we built the new Beanstalk deployments.

We take all of the steps

Building (or rather, rebuilding and improving) something like Beanstalk deployments takes the Wildbit team time. It's a process with many steps, including:

- discussing design improvements

- designing the feature

- sharing the designs with developers

- developing the new feature

- sharing the feature with testers for a first review

- the tester, designer and developer working closely together to polish the feature until it's ready

- the tester approving the feature for release

- the developer and tester testing the migration from the old feature to the new one

- performance testing the feature on staging

- releasing the feature and making sure it is documented and announced correctly

- monitoring the new feature's performance in production

- supporting the new feature by helping customers and fixing newly found bugs on the spot

That sounds like a LOT, right? To deliver a high-quality feature and a product people can rely on, we need to go through all of these steps, and sometimes even more.

Our process begins with design

It all starts with discussing how to improve the design of the feature. The design discussion is led by Eugene, Chris and Natalie, and all team members can participate. This discussion is one of the key elements of a new feature's success: if the design takes the wrong path, the new feature will cause problems for customers.

Eugene then turns the discussion into new designs built as full HTML prototypes, and once they are approved, development of the new feature begins.

Development is more than implementing designs

As a software tester, I have always admired exceptional developers like the ones working at Wildbit. Nothing is more satisfying to me than working with a developer who cares about their work and is willing to collaborate with me toward one goal: releasing a feature with as few bugs as possible.

Soon after development begins, we can actually see and use the new feature in our staging environment. But that is just the beginning of the long road to getting the feature (deployments, in this case) working smoothly and correctly.

New features are implemented one by one. As they are tested and approved by developers, they are assigned to me for the next round of checks.

Tools help solve the challenges of thorough testing

When big features like deployments are assigned to me, I usually test them section by section. Because so many different sections need to be checked, there is a lot of back-and-forth communication with the developers.

Some might think that improvements to existing features are easier to test and release. It's actually the opposite, since I need to:

- check if the new feature works

- check if ALL old features are preserved (regression testing)

- check whether the features are as easy for users to understand as they were before the improvements

- do a migration check

- check whether performance is the same as it was before the improvements were released

When testing a brand-new feature, I usually only need to do the first check.

When reviewing improved features, I first check whether they work at all, and then go into more detail feature by feature, always focusing on one section at a time while making sure every section gets equal attention. Testing is a combination of manual testing (small tools like Firebug help greatly), running pre-written Ruby scripts, and automated testing. For improved features in particular, automated tests help A LOT.

Ruby scripts allow me to quickly populate repository data in Beanstalk and test with a large number of deployments in a short period of time.
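The post does not show these scripts, so what follows is only a minimal sketch of the idea, assuming a hypothetical in-memory `Deployment` record rather than Beanstalk's real data model or API. It generates a reproducible batch of fake deployments (seeded random environments, states and 40-character commit ids) that a test environment could then load however it allows.

```ruby
# Sketch only: Deployment is a hypothetical record shape, not Beanstalk's data model.
Deployment = Struct.new(:id, :repository, :environment, :revision, :state, :created_at)

ENVIRONMENTS = %w[staging production].freeze
STATES = %w[waiting running success failed].freeze

# Builds `count` fake deployments so list views, filters and pagination can be
# exercised with a large volume of data. A fixed seed makes every run produce
# the same data, which keeps failures reproducible.
def build_fake_deployments(count, repository: "demo-repo", seed: 42,
                           start: Time.utc(2000, 1, 1))
  rng = Random.new(seed)
  Array.new(count) do |i|
    Deployment.new(
      i + 1,                                     # sequential ids starting at 1
      repository,
      ENVIRONMENTS[rng.rand(ENVIRONMENTS.size)],
      format("%040x", rng.rand(2**160)),         # fake 40-character hex commit id
      STATES[rng.rand(STATES.size)],
      start + i * 60                             # one deployment per minute
    )
  end
end

deployments = build_fake_deployments(500)
puts deployments.size
puts deployments.count { |d| d.state == "failed" }
```

Because the generator is seeded, a bug found with one batch of data can be reproduced exactly by rerunning the script with the same arguments.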

I use a variety of automated tests written in Ruby (RSpec) with Selenium to check that existing features are not broken, and I run them through Jenkins so they are easy to execute. I will share more details about how we use automated tests, how we review performance, and how we use other testing tools in an upcoming article about testing.

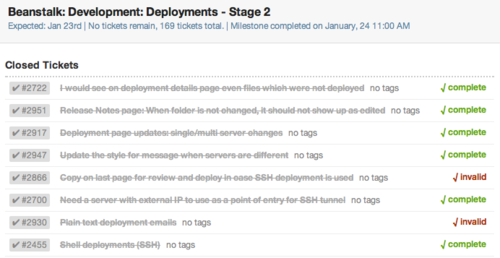

As testing goes into more detail, we all suggest adjustments in discussion to improve the look and feel of the application. The deeper we go, the more small tickets appear in the ticketing system, and the more features are finished and approved.

Once the entire feature set is approved, deployments are almost ready to be released to production.

Once the feature is released, automated tests run against a test account in production to make sure it works correctly. We constantly improve our automated tests and add new test cases.

Planning for seamless migrations

Once work on a feature improvement is finished, a key part of releasing it is a migration test. You must be sure that the improved feature works correctly for both existing and new users, and that everyone can keep using it without any problems. When deployments were ready for release, Dima did a migration check and I helped him a bit with finalizing the release.
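A migration check can be sketched as comparing every record before and after the migration. The example below is a hypothetical illustration, not the actual check used for deployments: it assumes an old `OldServer` shape with an `auto_deploy` flag that becomes a `trigger` of `:automatic` or `:manual` in a new `NewEnvironment` shape, and it reports every record that went missing, changed meaning, or appeared unexpectedly.

```ruby
# Sketch only: both record shapes are hypothetical, not Beanstalk's real schema.
OldServer = Struct.new(:id, :name, :branch, :auto_deploy)
NewEnvironment = Struct.new(:id, :name, :branch, :trigger)

# Converts one old-style record into the new shape.
def migrate(old)
  NewEnvironment.new(old.id, old.name, old.branch, old.auto_deploy ? :automatic : :manual)
end

# Compares records before and after migration and returns a list of problems.
# An empty list means every old record survived with its meaning intact.
def migration_problems(old_records, new_records)
  by_id = new_records.map { |r| [r.id, r] }.to_h
  problems = []

  old_records.each do |old|
    migrated = by_id[old.id]
    if migrated.nil?
      problems << "record #{old.id} is missing after migration"
      next
    end
    problems << "record #{old.id}: name changed" unless migrated.name == old.name
    problems << "record #{old.id}: branch changed" unless migrated.branch == old.branch
    expected = old.auto_deploy ? :automatic : :manual
    unless migrated.trigger == expected
      problems << "record #{old.id}: trigger is #{migrated.trigger}, expected #{expected}"
    end
  end

  # New records with no old counterpart point to a bug in the migration itself.
  extra = by_id.keys - old_records.map(&:id)
  problems << "unexpected new records: #{extra.join(', ')}" unless extra.empty?
  problems
end

old = [OldServer.new(1, "Staging", "develop", true),
       OldServer.new(2, "Production", "master", false)]
p migration_problems(old, old.map { |o| migrate(o) }) # => []
```

Returning a list of problems instead of stopping at the first mismatch means one run over a staging copy of the data shows every broken record at once.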

Don’t forget the documentation

When a feature like the new deployments is released, you have to make sure customers know about it and are not taken by surprise. Alex and Natalie make sure every feature at Wildbit is properly documented and announced to customers.

Don’t just release it, support it

When all the work is done and you are ready for that glass of champagne, you still have to support the feature you have released. After the release, there will be bugs and suggestions.

We always make sure to take suggestions into account and fix bugs on the spot. That is the only way to make features long-lasting.

The new deployments update is one of the biggest recent improvements we've made to Beanstalk. It opens the door to a variety of new features in the future.

To make this update, we resolved more than 200 tickets and redesigned 15% of the Beanstalk interface to support it. While we did have to iron out some post-production bugs, the release was considered a success.

It’s all worth it

To build a high-quality application like Beanstalk, we go through all the steps I mentioned. Skipping any of them leads to sloppy releases and a messy post-production cleanup of bugs.

As you can see, building a high-quality feature takes a lot of effort, but in the end it is worth it, and our customers appreciate it.