Over the past couple of weeks I've been running benchmarks comparing our existing SSD-based storage with NVMe flash storage to understand how much capacity we stand to gain. The project began when we started hitting the disk IO limits of our existing MySQL servers for Postmark. It became abundantly clear that we were IO bound and needed better-performing servers to replace our older systems.

A new storage technology called NVMe (Non-Volatile Memory Express) has recently become available. It combines the efficiency & speed of non-volatile flash storage, similar to what is found in FusionIO & similar devices, with the form factor of traditional SSDs. NVMe replaces the traditional SATA or SAS bus with a PCIe bus, taking advantage of flash storage's ability to handle parallel transactions & other capabilities that mechanical hard drives lack. This has the potential to improve performance & throughput significantly. We wanted to find out just how much of an improvement we could expect. Our results were somewhat surprising.

Configurations

During testing we worked with four distinct configurations for comparison. The configurations aren't exactly ideal, but they were sufficient to give us an idea of what to expect:

- Current production - 4x SATA SSDs in RAID10 behind an LSI 8260-4i with a BBU configured with write back caching. 70GB RAM, 8-cores. CentOS 6.6.

- New system w/ SSD - 6x SATA SSDs in RAID10 using Linux mdadm. 128GB RAM, 24-cores. CentOS 6.6.

- New system w/ Intel P3500 NVMe - single drive*. 128GB RAM, 24-cores. CentOS 6.6.

- New system w/ Intel 750 Series NVMe - single drive*. 128GB RAM, 24-cores. CentOS 6.6.

* The server only has two NVMe drive bays so production would be running in RAID1. The server was configured with a single P3500 & a single 750 Series at the same time for comparative testing convenience.

Tests

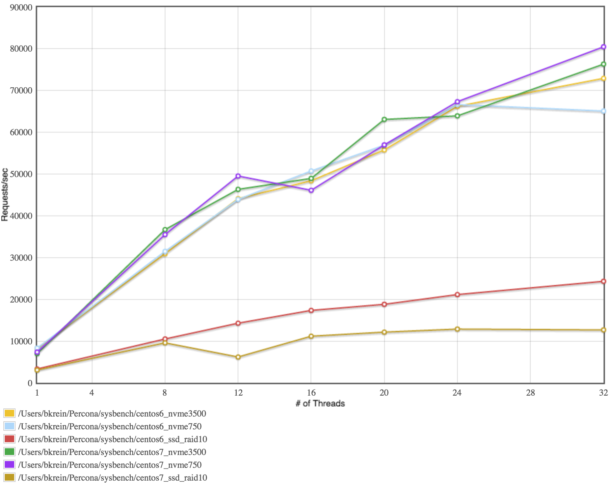

We're using Sysbench for our benchmarking tests. It was recommended to us by Percona, who provide MySQL consulting for us.

Sysbench was run on all four configurations with the following options:

| No. Threads | File Size | Block Size | Mode |

|---|---|---|---|

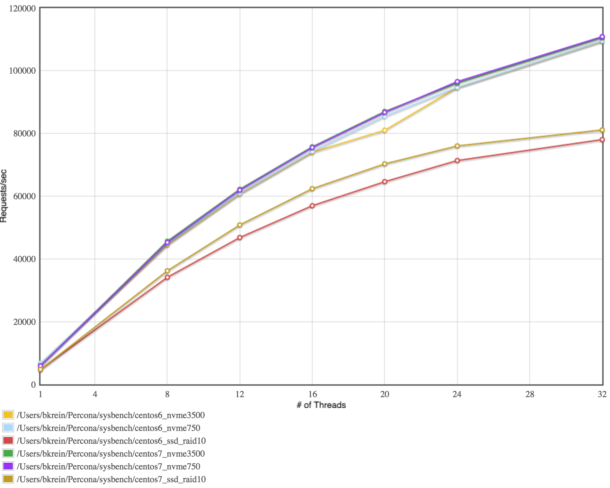

| 1, 8, 12, 16, 20, 24, 32 | 40GB | 16k | Random Reads |

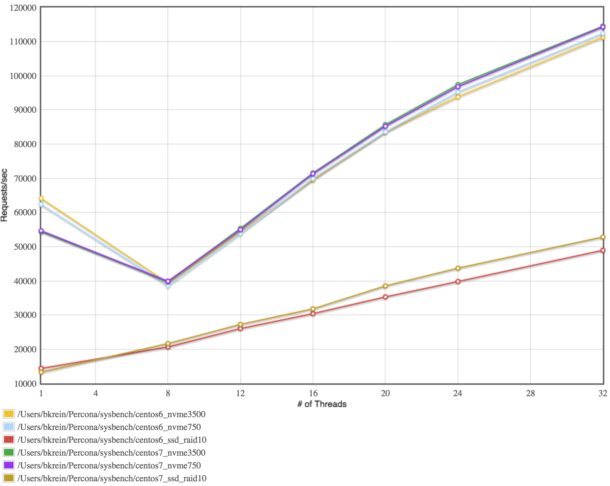

| 1, 8, 12, 16, 20, 24, 32 | 40GB | 16k | Sequential Reads |

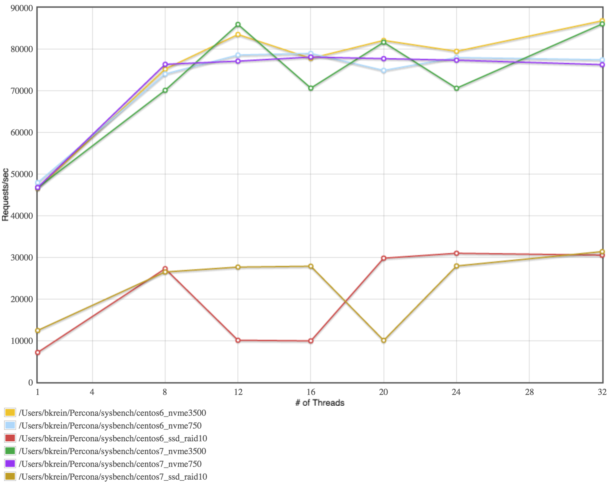

| 1, 8, 12, 16, 20, 24, 32 | 40GB | 16k | Random Writes |

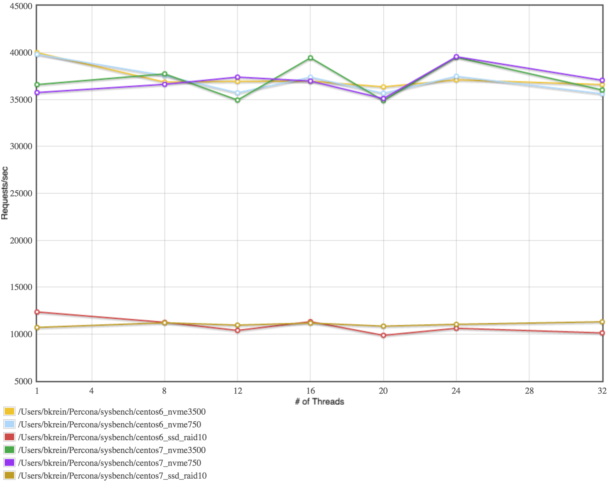

| 1, 8, 12, 16, 20, 24, 32 | 40GB | 16k | Sequential Writes |

| 1, 8, 12, 16, 20, 24, 32 | 40GB | 16k | Random Read-Write |
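The test matrix above works out to 35 runs per configuration (5 modes, 7 thread counts each). The exact command lines we used aren't reproduced here, so the following is only a minimal sketch of how such a matrix could be generated. It assumes the legacy Sysbench 0.4/0.5 `fileio` flags (`--test`, `--file-total-size`, `--file-block-size`, `--file-test-mode`, `--num-threads`), and the mapping from the "Mode" column to Sysbench test-mode names is likewise an assumption. The script only prints commands; it does not run Sysbench.

```python
from itertools import product

# Values taken from the test matrix above.
THREAD_COUNTS = [1, 8, 12, 16, 20, 24, 32]
FILE_TOTAL_SIZE = "40G"
BLOCK_SIZE = "16K"

# Assumed mapping from the "Mode" column to sysbench fileio test modes.
TEST_MODES = {
    "Random Reads": "rndrd",
    "Sequential Reads": "seqrd",
    "Random Writes": "rndwr",
    "Sequential Writes": "seqwr",
    "Random Read-Write": "rndrw",
}


def build_commands(stage="run"):
    """Return one sysbench command line per (mode, thread count) pair."""
    commands = []
    for mode, threads in product(TEST_MODES.values(), THREAD_COUNTS):
        commands.append(
            "sysbench --test=fileio"
            f" --file-total-size={FILE_TOTAL_SIZE}"
            f" --file-block-size={BLOCK_SIZE}"
            f" --file-test-mode={mode}"
            f" --num-threads={threads}"
            f" {stage}"
        )
    return commands


if __name__ == "__main__":
    for command in build_commands():
        print(command)
```

Note that the `fileio` test expects the test files to be created with a `prepare` stage before `run` & removed with `cleanup` afterwards. Newer Sysbench releases (1.0 and later) use `sysbench fileio` & `--threads` instead, so adjust the flag names to match the installed version.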

Findings

It was not surprising to find that the NVMe drives did significantly better than the 6-disk SSD RAID10 (~175-180% better). However, it was surprising that the gap between NVMe & the existing production SSD RAID10 was much smaller (only ~30-50%). While a 30-50% improvement is certainly significant, it is a long way from the ~175% difference seen against the new SSD RAID.

Upon further consideration of the differences, we concluded that the RAID card itself was giving the existing system a dramatic performance boost through write back caching. While caching isn't useful for NVMe due to the way it handles the IO, it clearly makes a huge difference in SATA/SAS performance.

In the new servers we found that the P3500 drives performed best at 16 concurrent IO threads while the 750 Series performed slightly better with 8 threads. This could be due to limitations in the system itself. Perhaps that's something we can explore in more depth another day.

We are currently working with CentOS 6.x, which is getting a bit long in the tooth with its 2.6.x kernel. Newer 3.x kernels include improvements to the NVMe drivers which boost performance even more. If possible, consider migrating production systems to a newer kernel to get the best performance from this emerging technology.

| Volume | Threads | Filesize | Blocksize | Test | Reads | Writes | Throughput | IOPS | Execution Time |
|---|---|---|---|---|---|---|---|---|---|
| SSD M4 512GB | 1 | 4G | 4KB | Seq RW | 0 | 10000 | 242.18MB/sec | 61997.70 | 0.1613s |
| SSD M4 512GB | 8 | 40G | 16KB | Random RW | 5999 | 4001 | 758.8MB/sec | 48563.41 | 0.2059s |
| SSD M4 512GB | 12 | 40G | 16KB | Random RW | 5996 | 4004 | 752.31MB/sec | 48147.59 | 0.2077s |
| SSD M4 512GB | 16 | 40G | 16KB | Random RW | 6000 | 4000 | 706.35MB/sec | 45206.47 | 0.2212s |
| SSD M4 512GB | 20 | 40G | 16KB | Random RW | 6000 | 4000 | 693.71MB/sec | 44397.20 | 0.2252s |
| SSD M4 512GB | 24 | 40G | 16KB | Random RW | 5988 | 4012 | 672.89MB/sec | 43064.83 | 0.2322s |
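The Throughput & Execution Time columns in the table above can be derived from the IOPS column, which makes for a handy sanity check when reading Sysbench output. The sketch below assumes throughput is IOPS multiplied by block size with 1 MB = 1024 KB, & that each run issued 10,000 requests (the sum of Reads & Writes in every row). The row values are copied from the table; the function names are ours for illustration.

```python
# (threads, block size in KB, IOPS, reported MB/sec, reported seconds),
# copied from the results table above.
ROWS = [
    (1, 4, 61997.70, 242.18, 0.1613),
    (8, 16, 48563.41, 758.80, 0.2059),
    (12, 16, 48147.59, 752.31, 0.2077),
    (16, 16, 45206.47, 706.35, 0.2212),
    (20, 16, 44397.20, 693.71, 0.2252),
    (24, 16, 43064.83, 672.89, 0.2322),
]

TOTAL_REQUESTS = 10000  # Reads + Writes in every row of the table


def throughput_mb_per_sec(iops, block_kb):
    """Throughput implied by an IOPS figure, assuming 1 MB = 1024 KB."""
    return iops * block_kb / 1024


def execution_time_sec(iops, requests=TOTAL_REQUESTS):
    """Time needed to complete a fixed number of requests at a given IOPS."""
    return requests / iops


if __name__ == "__main__":
    for threads, block_kb, iops, reported_mb, reported_sec in ROWS:
        print(
            f"{threads:>2} threads: "
            f"{throughput_mb_per_sec(iops, block_kb):.2f} MB/sec "
            f"(reported {reported_mb:.2f}), "
            f"{execution_time_sec(iops):.4f}s (reported {reported_sec:.4f})"
        )
```

The computed values agree with the reported ones to within rounding, which also indicates that the throughput figures are megabytes per second rather than megabits.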

Conclusions

While these basic benchmark tests are far from comprehensive, they give a rough idea of how the drives compare to each other, and they show that NVMe clearly has a significant performance advantage over either of the SSD options.

The cost difference between the Intel P3500, which is a lower-end drive with lower MTBF, and the 750 Series is relatively small (about $300). The 750 also performed roughly 30% better than the P3500, which makes it the more attractive option.

We definitely look forward to migrating to these new servers & spending more time with Sysbench & other tools to dive deeper into performance benchmarking in the future. For now, we're excited by this new storage technology & look forward to how it will advance over time.